Wafer uses autonomous AI agents to profile and optimize GPU inference performance across production stacks.

Features

- AI-agent-driven inference diagnostics

- Full-stack optimization from kernels to models

- Higher GPU inference throughput and lower latency

- Fast identification of bottleneck paths

- Fits continuous optimization workflows for engineering teams

- Built for production inference deployment

Use Cases

- Pre-launch performance testing and tuning

- Cost control for online inference services

- Latency optimization under high concurrency

- Higher GPU resource utilization

- Productivity gains for inference platform teams

- Performance tuning for LLM and multi-model systems

FAQ

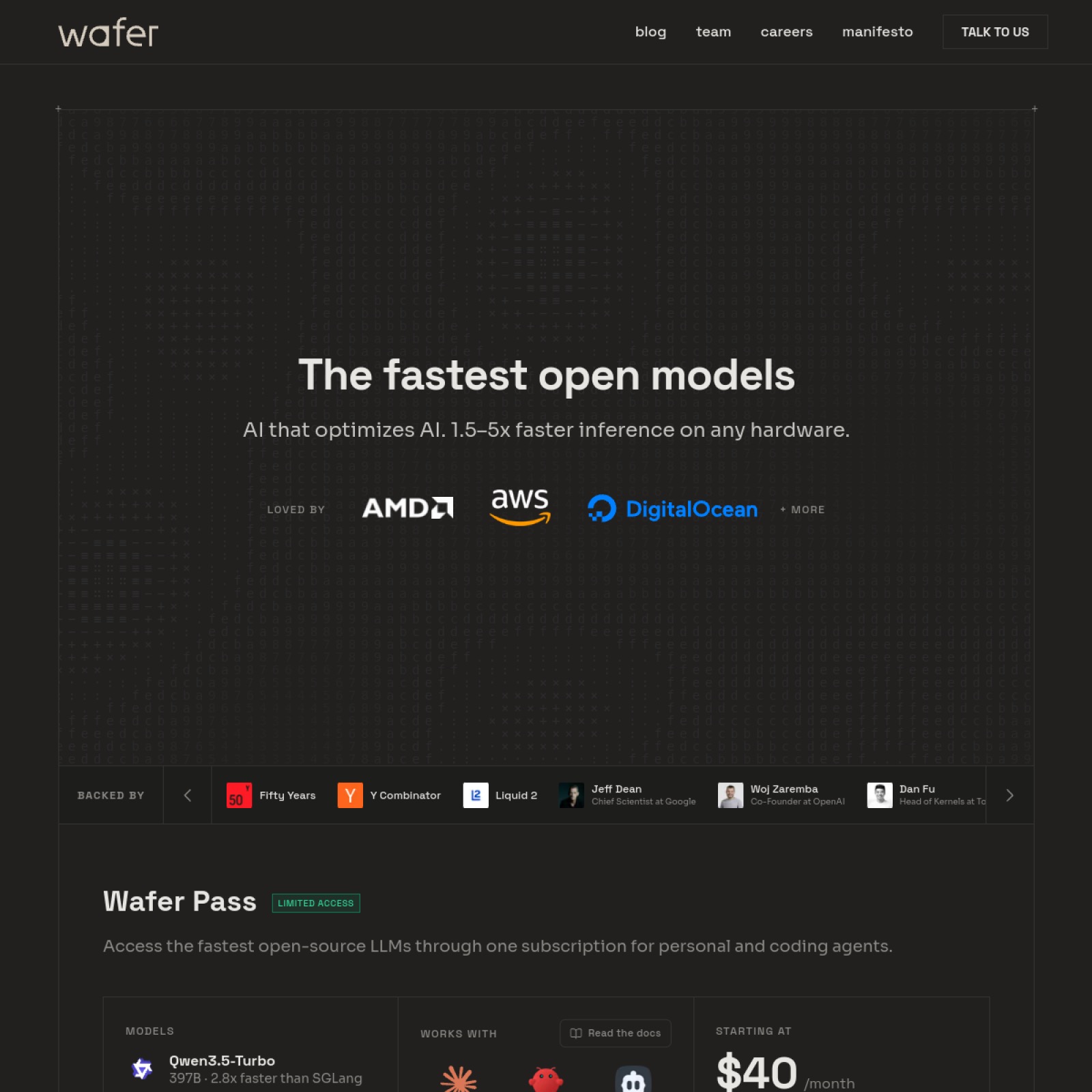

Wafer provides AI-agent-driven optimization for inference systems. It analyzes and improves performance across the GPU stack so teams can find bottlenecks faster and ship high-performance model serving. Core capabilities include AI-agent-driven inference diagnostics, full-stack optimization from kernels to models, and higher GPU inference throughput with lower latency.

Common scenarios include pre-launch performance testing and tuning, cost control for online inference services, and latency optimization under high concurrency.