Simple, open-source LLM tracing for AI agents. Track prompts, completions, latency, token usage, and cost.

Features

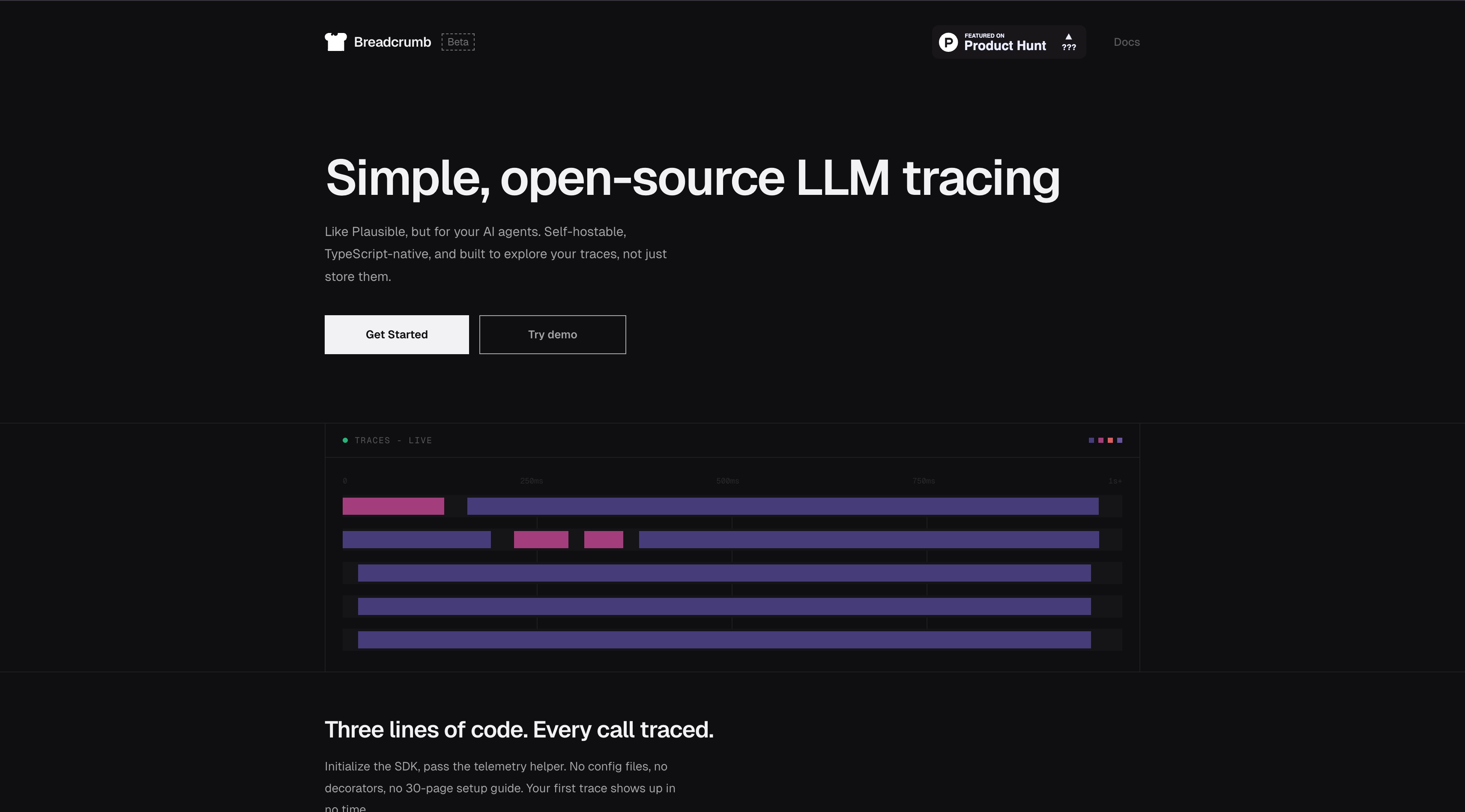

- End-to-end LLM call tracing

- Prompt and completion replay

- Token and cost analytics

- Latency and performance analysis

- Open-source, self-hostable deployment

- Quick integration with TypeScript projects
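The per-call tracing and cost features above can be illustrated with a small wrapper that times an LLM call and converts its token counts into a cost. This is a minimal sketch, not Breadcrumb's SDK: the names `traceCall`, `TraceRecord`, `LlmResult`, and `Pricing`, and the assumption that pricing is quoted per 1,000 prompt and completion tokens, are all hypothetical.

```typescript
// Hypothetical shapes for illustration only; not Breadcrumb's API.
interface LlmResult {
  completion: string;
  promptTokens: number;
  completionTokens: number;
}

// One record per request: prompt, completion, latency, tokens, and cost.
interface TraceRecord {
  model: string;
  prompt: string;
  completion: string;
  latencyMs: number;
  promptTokens: number;
  completionTokens: number;
  costUsd: number;
}

// Assumed USD prices per 1,000 tokens; real prices depend on provider and model.
interface Pricing {
  promptPer1K: number;
  completionPer1K: number;
}

// Wraps any async LLM call, measures its latency, computes its cost,
// and appends a trace record to the given sink before returning the result.
async function traceCall(
  model: string,
  prompt: string,
  pricing: Pricing,
  call: (prompt: string) => Promise<LlmResult>,
  sink: TraceRecord[],
): Promise<LlmResult> {
  const start = performance.now();
  const result = await call(prompt);
  const latencyMs = performance.now() - start;
  const costUsd =
    (result.promptTokens / 1000) * pricing.promptPer1K +
    (result.completionTokens / 1000) * pricing.completionPer1K;
  sink.push({
    model,
    prompt,
    completion: result.completion,
    latencyMs,
    promptTokens: result.promptTokens,
    completionTokens: result.completionTokens,
    costUsd,
  });
  return result;
}

// Usage with a stand-in model function (hypothetical, no network call).
const demoTraces: TraceRecord[] = [];
const fakeModel = async (p: string): Promise<LlmResult> => ({
  completion: `echo: ${p}`,
  promptTokens: 1000,
  completionTokens: 500,
});
traceCall("demo-model", "Hello", { promptPer1K: 0.5, completionPer1K: 1.5 }, fakeModel, demoTraces)
  .then(() => console.log(demoTraces[0].costUsd)); // 1.25
```

Keeping the sink as a plain array keeps the sketch self-contained; a real tracing backend would presumably ship records to storage instead.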

Use Cases

- AI agent debugging and troubleshooting

- Cost governance for model usage

- Prompt effectiveness optimization

- Observability for production deployments

- Team-level AI quality monitoring

- Faster LLM app development

FAQ

What is Breadcrumb?

Breadcrumb is an LLM tracing tool for AI apps and agents that records the prompt, completion, latency, token usage, and cost of every request. It is open source, can be self-hosted, and integrates easily into TypeScript projects. Its core capabilities include end-to-end LLM call tracing, prompt and completion replay, and token and cost analytics.

What is it used for?

Common use cases include debugging and troubleshooting AI agents, governing model usage costs, and optimizing prompt effectiveness.
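Cost governance largely comes down to aggregating per-request cost records by model and checking the totals against a budget. The sketch below assumes a hypothetical `CostTrace` record shape and a hypothetical per-model budget policy; neither is taken from Breadcrumb itself.

```typescript
// Hypothetical minimal trace shape for this sketch; not Breadcrumb's schema.
interface CostTrace {
  model: string;
  promptTokens: number;
  completionTokens: number;
  costUsd: number;
}

interface ModelUsage {
  calls: number;
  totalTokens: number;
  totalCostUsd: number;
}

// Groups traces by model and sums call count, tokens, and cost per model.
function usageByModel(traces: CostTrace[]): Map<string, ModelUsage> {
  const usage = new Map<string, ModelUsage>();
  for (const t of traces) {
    const u = usage.get(t.model) ?? { calls: 0, totalTokens: 0, totalCostUsd: 0 };
    u.calls += 1;
    u.totalTokens += t.promptTokens + t.completionTokens;
    u.totalCostUsd += t.costUsd;
    usage.set(t.model, u);
  }
  return usage;
}

// Returns the models whose summed cost exceeds a per-model budget
// (a hypothetical policy used only to illustrate governance checks).
function overBudget(usage: Map<string, ModelUsage>, budgetUsd: number): string[] {
  return Array.from(usage.entries())
    .filter(([, u]) => u.totalCostUsd > budgetUsd)
    .map(([model]) => model);
}
```

For example, two calls to model `a` costing 0.25 and 0.5 USD and one call to model `b` costing 0.125 USD would flag only `a` against a 0.5 USD budget.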